Blog

The OCaml Planet RSS

Articles and videos contributed by experts, companies, and passionate developers from the OCaml community. From in-depth technical articles and project highlights to community news and insights into open source projects, the OCaml Planet RSS feed aggregator has something for everyone.

Want your Blog Posts or Videos to Show Here?

To contribute a blog post, or add your RSS feed, check out the Contributing Guide on GitHub.

After the BBC/ITV media run we had a talk at Pint of Science, two cracking Part II dissertations on CE/TESSERA and OxCaml vector RAG, and put TESSERA v1.1 weights on HuggingFace.

Native OxCaml system packages for Debian/Ubuntu, Fedora, Arch and Homebrew — plus reviving a 2013 GPG key that modern tooling rejects for SHA-1, and using agentic coding to collapse the opam build into one tarball.

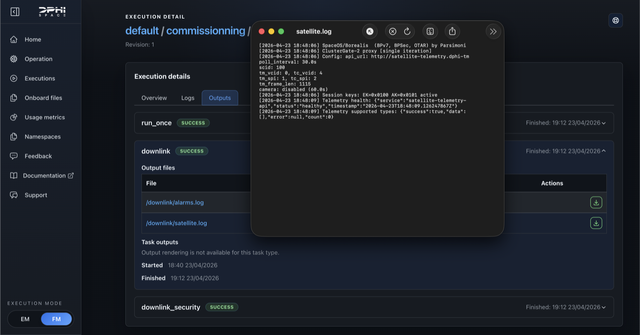

Consolidating my OCaml trees for easier OxCaml deployment, shipping native system packages for OxCaml which then got into space, and remembering Peter Neumann.

On 23 April, Borealis booted in orbit on DPhi Space's ClusterGate-2: a pure-OCaml CCSDS protocol stack with end-to-end-encrypted command and control and post-quantum key rotation. OxCaml is what comes next.

An update on the voluntary AI disclosure proposal, digesting the security, quality and legal feedback, and some concrete next steps around maintenance intent, multi-repository tooling, and reputation.

The O in OCaml is for its object-oriented extension, but I needed a way to emulate the constraints of inheritance without it. This raised some interesting design questions about how to do it using only the core ML language.

A few days after opam 2.0 was retired from the ocaml-ci build pipeline, Jon noticed that some jobs were failing. I immediately concluded that the removal was to blame, but it wasn’t.